What to do when “Research Shows” shuts you down

Anna Stokke: A guide for parents and teachers on spotting and stopping the spread of bad ideas in education

Chalk & Talk is one of our favorite sources for education content, and so we’re thrilled to have this guest post from Anna Stokke, based on a presentation she gave at researchED Toronto in June 2025. You can listen to Anna talk about this article on her most recent Chalk & Talk episode, now available here!

The Schoolhouse is a series from Education Progress featuring articles for and from teachers, parents, education officials, and others working in the education system.

People often ask me how I became involved in math education, and why I so often call out poor practice and insist on evidence. As with many of us, it’s personal.

We sent our daughter to school expecting she’d be taught math. After all, that’s what schools do: they teach kids how to read and do math. But our daughter didn’t seem to be learning anything in her math class. By Grade 3, most days consisted of either a “problem of the day” that students didn’t have the skills to solve, or confusing lessons on convoluted methods for doing basic arithmetic.

It all came to a head when we were invited to a parent math information night. “What should math look and feel like?” the flyer asked. “How do we help children see that math is a subject where thinking, not just remembering, is the main event?”

Who could be against thinking? Certainly not me. But if I’d known then what I know now, I’d have recognized this as code for “no remembering at all.” My husband and I walked to the school that evening hopeful that those first few months of Grade 3 were an anomaly. Maybe soon they’d start teaching some math.

Instead, the parent math night deepened our concerns. We were told that the new math curriculum (or standards, as they’re called in the United States) discouraged standard algorithms (the traditional vertical algorithms for arithmetic) in favor of invented strategies or less efficient, overcomplicated procedures. We were assured this approach promotes “conceptual understanding” but, as mathematicians, my husband and I were skeptical. To reinforce the message, we were given a research paper that supposedly showed that standard algorithms are harmful, a claim that often traces back to the widely criticized work of Constance Kamii (see critiques here and here).

Looking around the room, most parents seemed satisfied. Who wouldn’t trust the schools to teach our children well?

Some weeks later, the school brought in a well-known Canadian math consultant and author to give a presentation to parents. When asked directly, she advised parents that it doesn’t really matter whether kids commit multiplication tables to memory, a claim that runs counter to strong evidence (see, for example, here and here). It became clear where those “problems of the day” were coming from.

As for the research paper we’d been given, it was a small case study involving children with learning difficulties. There was no control group and no statistical analysis. The researcher drew faulty conclusions that did not follow from the evidence. It didn’t support what the school was telling us at all.

This was my first encounter with education research, and I wasn’t impressed. How could flawed studies and non-existent evidence shape how children were being taught math? And why was no one asking questions?

Our daughter’s classroom wasn’t an outlier. The same patterns were playing out in classrooms across the country. That moment set me on a path that I’m still on today to push for better standards in math education. Over time, I’ve learned to read between the lines, to ask pointed questions, to look closely at what’s presented as evidence, and to never take education claims at face value.

I’d like to share what I’ve learned, in the hope that it helps other parents and teachers.

The first thing to understand is that the phrase “research shows” is used loosely in education. It doesn’t carry the same weight as when a doctor or scientist uses the phrase. In education, it might refer to a blog post, an opinion dressed up as evidence, or a small, low-quality study. Even a published journal article in education should be scrutinized. A surprising amount of education research is of very low quality.

But the phrase is powerful and persuasive. It makes opinion sound like fact and lends authority to claims that haven’t been properly tested. And when a claim is repeated enough, it starts to feel like established truth.

I call this the wildfire effect.

The wildfire effect: How bad ideas spread

A flawed study or opinion piece is cited by an influential educator.

It’s repeated at education conferences, professional development sessions, and on social media.

It appears in district documents, books, and other education papers.

It then gets cited as well-established research.

It becomes justification for education policy.

At no point in the process is the evidence seriously examined.

A good example is the claim that timed tests cause math anxiety. This is not supported by high-quality research, and most versions of the claim seem to trace back to an opinion piece written by influential math educator Jo Boaler. It has been repeated so many times that many educators believe it is accepted research. In fact, recommendations from the Institute of Education Sciences (IES) list timed activities as a research-informed way to support students struggling with math.

What’s at stake? A lot.

When weak or non-existent evidence drives decisions, students don’t get effective instruction, struggling students fall further behind, teachers are misled, resources are wasted, and high-quality research gets drowned out.

For this reason, I believe more teachers and parents need to be proactive. A PhD, a position of influence, or a published book is not proof of accuracy. Anyone can write a book, and people with PhDs can be wrong. Evidence is what matters, not credentials.

Ask for evidence, evaluate it, and become informed. Here’s where to start.

Step 1. Ask for evidence

When you ask for evidence, you may encounter tactics designed to shut you down.

One common tactic is shifting the burden of proof: someone makes a radical claim but refuses to provide evidence, and instead throws the burden of proof onto you.

Here’s an example:

Claim: “Research shows standard algorithms are harmful.”

You: “Please provide evidence of your claim.”

Response: “You need to prove they’re not harmful.”

Who holds the burden of proof? As Carl Sagan said, “Extraordinary claims require extraordinary evidence.” The burden of proof lies with the person making the claim, especially when it challenges established practice. In this case, stating that standard algorithms are harmful is a radical claim that goes against conventional wisdom. It is therefore incumbent on the individual making that claim to provide evidence.

Another tactic to watch out for is the firehose effect: avoiding providing evidence by overwhelming the questioner with sources. I’ve experienced this firsthand, repeatedly. When I asked for evidence, I’d be told to read a book with hundreds of references. The reason is simple: it’s impractical to check the validity of hundreds of references.

The best example I know of someone pushing back against the wildfire effect is from Stanford math professor Brian Conrad. Alarmed by dubious claims in a 1000-page draft of the 2021 California Math Framework (CMF), he carefully examined every claim and reference. He found repeated citation misrepresentations, non-peer-reviewed articles, and sweeping generalizations, which he documented in a public critique of the CMF.

Most people won’t do what Conrad did, but there are some things you can do to dampen the firehose effect. First, be specific. Ask for two or three high-quality studies, not entire books, on the specific topic in question. You can also divide large reference lists among several people. My colleagues and I did this recently when an education professor claimed that requiring K-8 teachers to take math made them worse math teachers. When asked for evidence, she sent 22 articles. We split them up, read every one, and wrote a report on our findings. None of the provided articles supported her claim, and several contradicted it.

A third tactic you might encounter is credential deflection: instead of providing you with the requested evidence, someone questions your right to ask for it. I’ve experienced this directly. A contract instructor in a Faculty of Education once publicly wrote this about me: “Let me stress that her perspective as a mathematician is far different than that of a math educator. Many of the statements she makes are a reflection of her lack of knowledge regarding effective practice.” This is an ad hominem attack: criticizing the person instead of engaging with the argument. He was implicitly saying that only people trained in education are qualified to evaluate evidence within that field — as though there’s something special about education research that the rest of us can’t understand.

But this is preposterous. A mathematician is often in an even better position to identify weak methodology in education papers, such as missing control groups, flawed statistical analyses, or illogical conclusions. Honestly, critical thinking skills are often all that’s needed to assess the validity of many education papers. If someone attacks your credentials, direct them back to the critical question: please provide evidence for your claim.

The fourth tactic to guard against is gaslighting: when someone tells you that a poor practice you’ve witnessed is barely happening in schools. This tactic is used to shut down conversations before they can start. For instance, I’ve been told that inquiry-based instruction is rare and that most classrooms are dominated by direct instruction: the practice is hardly occurring, so why dwell on its effectiveness? A simple response is to provide evidence to the contrary, which means the best defense is having receipts. Professional development and school newsletters reflect how teachers are being encouraged to teach. What professional development is being offered? How often does it focus on explicit instruction, retrieval practice, acquiring fluency with basic math facts, or direct teaching of critical math skills versus Building Thinking Classrooms, growth mindset, or inquiry? These kinds of school and district resources offer a paper trail that can’t be easily gaslit.

Step 2. Evaluate the evidence

If you do receive research articles (which I’ve found is unlikely, particularly when the claim runs counter to common sense), you’ll need to assess them. First, here’s what doesn’t count as evidence:

Opinion pieces

Newspaper or magazine articles

Articles that are not peer-reviewed

Position statements: the NCTM (National Council of Teachers of Mathematics), for example, has published position statements that are not grounded in evidence.

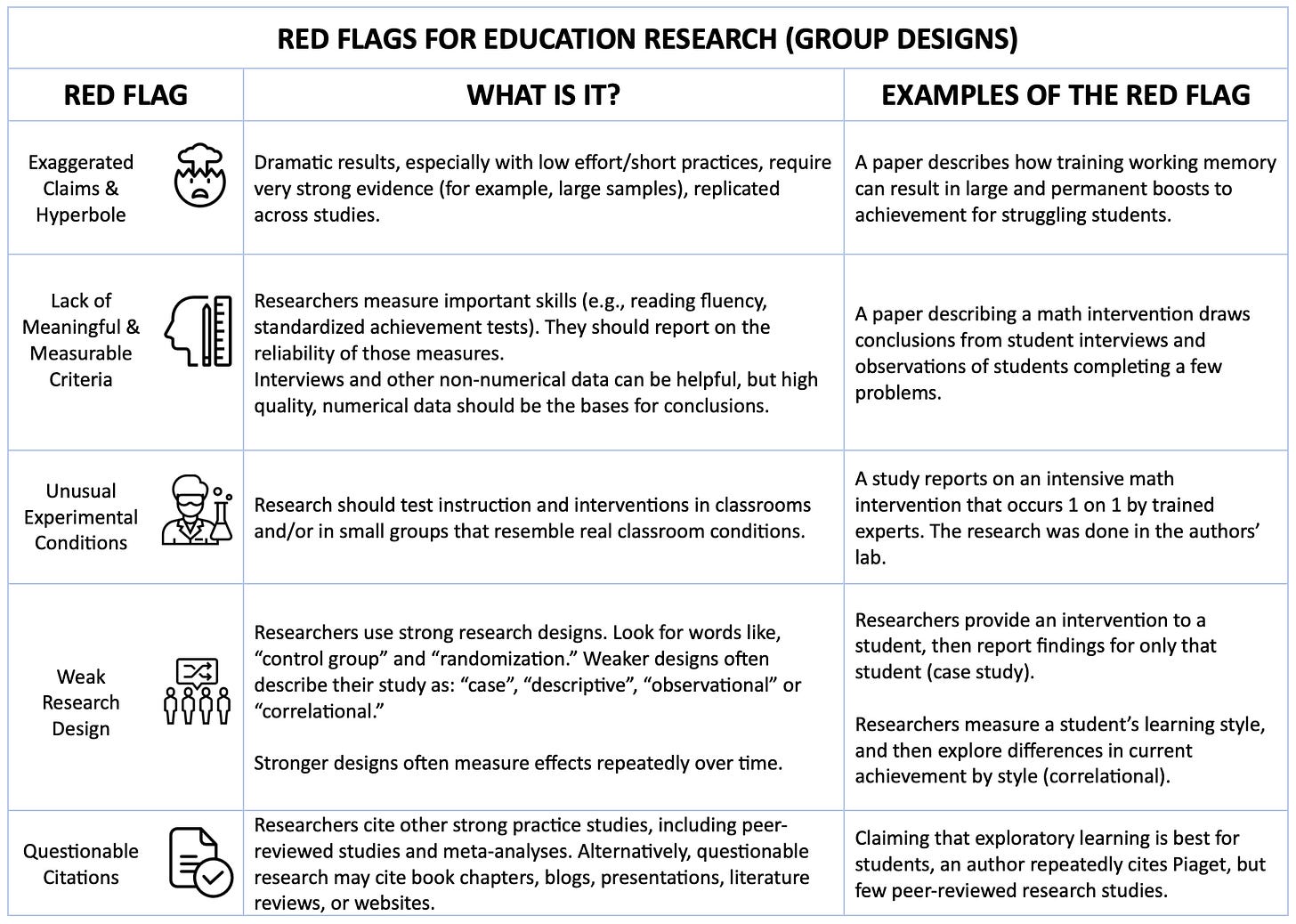

Next, watch for these five red flags, discussed in detail here:

One common issue, often found in education articles, is the lack of meaningful and measurable criteria.

Math education is full of appealing but vague terms: critical thinking, conceptual understanding, number sense, curiosity, differentiation. These terms have no standard definitions, which makes them difficult, if not impossible, to measure. If you are told that a program promotes number sense or critical thinking, that’s a red flag.

Another thing to watch out for is when programs get labeled as research-based, but the underlying studies didn’t actually measure whether students learned. For example, the popular math program Building Thinking Classrooms is often described this way, but the study usually cited in its support measured engagement, not whether students learned math (see critiques here and here). Engagement isn’t learning. Students can be very engaged but learn very little.

If a math program claims to be evidence-based, it should be supported by high-quality research that measured whether students learned math.

Step 3. Become informed

Finally, the best defense against bad ideas in education is to equip yourself with knowledge about evidence-informed practices. High-quality sources include the Institute of Education Sciences practice guides, the National Mathematics Advisory Panel Final Report, the National Center on Intensive Intervention, and the Education Endowment Foundation. These sources synthesize rigorous research and focus on what improves student outcomes. The more you know about what the best research supports, the easier it is to spot bad ideas before they spread. And check out my podcast Chalk & Talk, where I speak with experts from around the world about evidence-based education.

Our daughters have mathematicians as parents, so they got the math instruction they needed. Most children don’t have that advantage. If we want better outcomes for children, we must stop accepting “research shows” at face value and start demanding evidence.